📉 Hacking the Curse of Dimensionality: A Guide to Data Optimization

Posted by Dev_Playground | Tagged: #AI #DataEngineering

1. Introduction: Finding the 'Essence' in the Data Flood

In 2025 and beyond, we are no longer living in a mere data flood; we are in an era of 'Data Explosion.' As the number of features an AI model must process grows into the tens of thousands, engineers run into a massive barrier known as the 'Curse of Dimensionality': as dimensions are added, data points become sparse and distance measures lose their meaning, so a model needs far more samples to generalize.

Simply feeding in more data isn't the solution. Dimensionality Reduction is the key to a successful data diet. By removing noise and uncovering the lower-dimensional manifold on which the data actually lies, it is one of the most effective ways to cut model latency and curb overfitting.

2. Core Mechanisms: Two Strategies for Compression

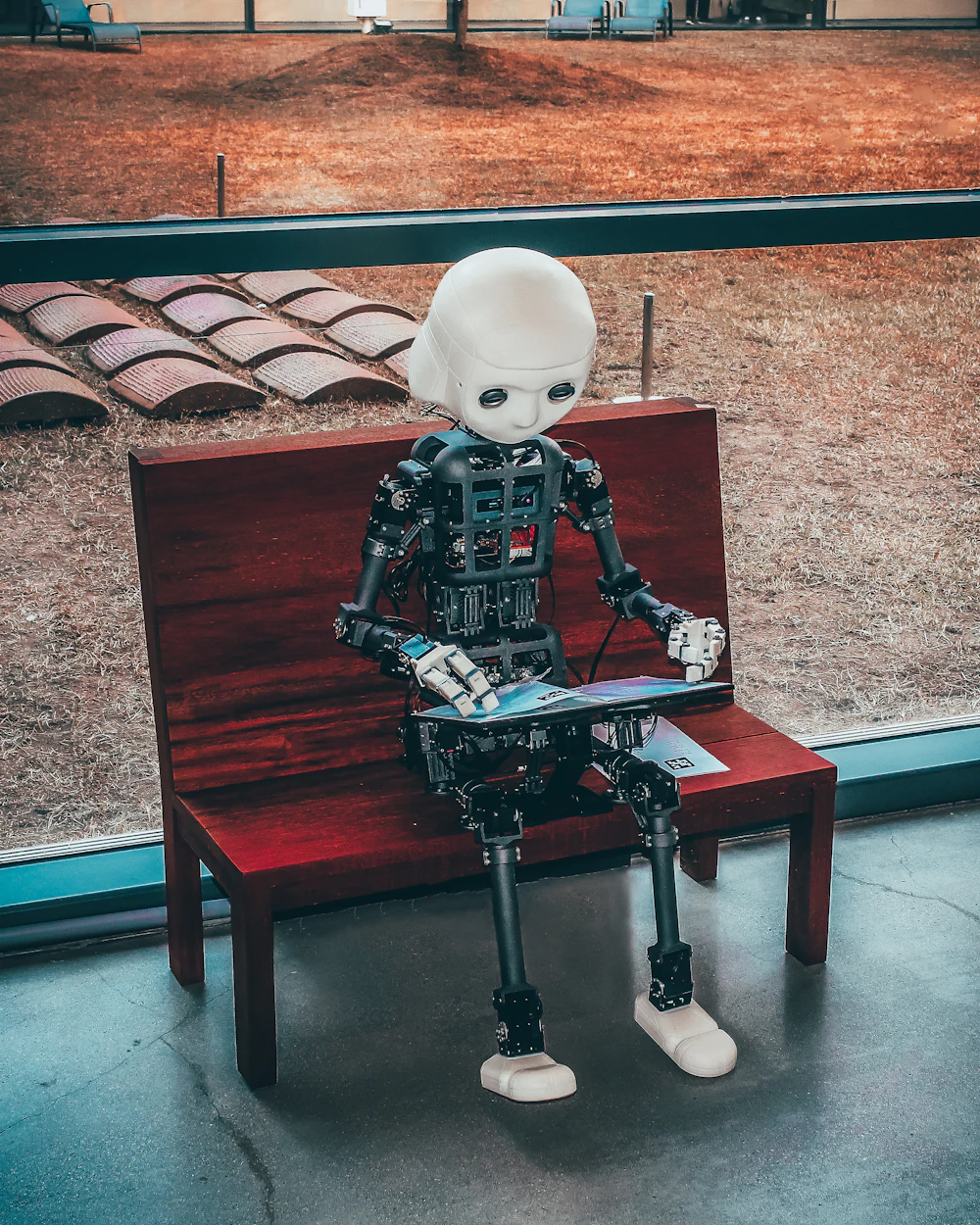

Don't mistake dimensionality reduction for simply deleting data. It's akin to compressing a high-resolution raw image into a standardized format without visible quality loss. There are two main approaches:

A. Feature Selection

This method keeps only the most informative variables from the original dataset and drops the rest.

- Concept: "Pick the Best 11 Players."

- Example: When predicting house prices, discard 'Owner's Name' and select only 'Square Footage' and 'Location'.

- Pros: High Explainability since the meaning of the original data is preserved.
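
To make the idea concrete, here is a minimal sketch of one simple filter-style approach: rank each feature by the absolute value of its correlation with the target and keep the top k. The data, the column names, and the `select_top_k` helper are all hypothetical, invented for illustration ('owner_name_length' is a numeric stand-in for the irrelevant 'Owner's Name' column). Real pipelines often use richer criteria than plain correlation.

```python
import math

def pearson(xs, ys):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # a constant column carries no linear signal
    return cov / math.sqrt(var_x * var_y)

def select_top_k(features, target, k):
    """Return the names of the k features with the largest |correlation| to target."""
    scores = {name: abs(pearson(col, target)) for name, col in features.items()}
    return sorted(scores, key=scores.get, reverse=True)[:k]

# Hypothetical toy data, invented for illustration only.
features = {
    "square_footage": [50, 80, 120, 65, 150, 95],
    "location_score": [3, 5, 8, 4, 9, 6],
    "owner_name_length": [5, 5, 7, 6, 6, 4],
}
price = [200, 330, 520, 260, 640, 400]

selected = select_top_k(features, price, k=2)
print(selected)
```

Because the selected columns are original features, the result stays easy to explain, which is the main selling point noted above.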

B. Feature Extraction

This creates entirely new 'Latent Variables' by mathematically combining existing ones.

- PCA (Principal Component Analysis): Finds the axes with the highest variance and projects data linearly.

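- t-SNE & UMAP: Non-linear methods that map high-dimensional data to lower dimensions while preserving local neighborhood structure (which points are close to which). Distances between far-apart clusters in the resulting plot are much less reliable. Widely used for visualizing complex non-linear structures.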
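
The PCA bullet above can be sketched in a few lines of NumPy. This is a minimal illustration that uses the SVD of the centered data, not a replacement for a library implementation; the `pca_project` helper and the synthetic data are assumptions made for the example.

```python
import numpy as np

def pca_project(X, n_components):
    """Project rows of X onto the top principal components.

    Returns (Z, components, explained_variance_ratio).
    """
    X_centered = X - X.mean(axis=0)
    # SVD of the centered data: rows of Vt are the principal axes,
    # ordered by decreasing singular value (i.e. decreasing variance).
    U, S, Vt = np.linalg.svd(X_centered, full_matrices=False)
    components = Vt[:n_components]
    Z = X_centered @ components.T  # linear projection onto those axes
    variance = S ** 2 / (X.shape[0] - 1)
    ratio = variance / variance.sum()
    return Z, components, ratio[:n_components]

rng = np.random.default_rng(0)
# Hypothetical data: 200 points lying near a line in 3-D, plus small noise.
t = rng.normal(size=(200, 1))
X = t @ np.array([[2.0, 1.0, -1.0]]) + 0.05 * rng.normal(size=(200, 3))

Z, components, ratio = pca_project(X, n_components=1)
print(Z.shape)  # one coordinate per point instead of three
print(ratio)    # share of total variance kept by the first axis
```

Since the synthetic points sit almost on a line, a single component keeps nearly all of the variance. On real data the ratio tells you how much you gave up.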

3. 2026 Trends: The Era of LLMs and Vector DBs

With the advent of Generative AI, the status of dimensionality reduction has shifted completely. This is because 'Embeddings', the numeric representations that models produce for text or images, are themselves high-dimensional vectors, often with hundreds to thousands of dimensions each.

Recently, dimensionality reduction techniques combined with Quantization have become essential infrastructure for efficiently searching millions of vectors in RAG (Retrieval-Augmented Generation) systems. Now, dimensionality reduction is not just preprocessing; it is a core business strategy to reduce cloud costs.
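
To see where the cost savings come from, here is a rough sketch that shrinks a batch of vectors in two steps: reduce the dimension, then quantize each value to one byte. Everything here is an assumption for illustration: the embeddings are random stand-ins, the sizes (768 and 256) are arbitrary, a random projection stands in for whatever reduction method a real pipeline would use, and the symmetric int8 scheme is just one simple form of quantization.

```python
import numpy as np

def quantize_int8(vectors):
    """Symmetric scalar quantization of float vectors to int8.

    Returns the int8 codes and the single scale factor needed to dequantize.
    """
    max_abs = np.abs(vectors).max()
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    codes = np.clip(np.round(vectors / scale), -127, 127).astype(np.int8)
    return codes, scale

def dequantize(codes, scale):
    """Approximate reconstruction of the original float values."""
    return codes.astype(np.float32) * scale

rng = np.random.default_rng(0)
# Hypothetical stand-in for embeddings: 1,000 vectors of 768 float32 values.
embeddings = rng.normal(size=(1000, 768)).astype(np.float32)

# Step 1: reduce dimensionality. A random projection to 256 dims is used
# here purely as a placeholder for the pipeline's real reduction method.
projection = rng.normal(size=(768, 256)).astype(np.float32) / np.sqrt(256)
reduced = embeddings @ projection

# Step 2: quantize the reduced vectors to one byte per dimension.
codes, scale = quantize_int8(reduced)

print(embeddings.nbytes)  # storage before: 1000 * 768 * 4 bytes
print(codes.nbytes)       # storage after:  1000 * 256 * 1 bytes
```

In this toy setup the stored payload shrinks by a factor of 12 (3x from fewer dimensions, 4x from one-byte values). Whether search quality survives that compression is something to measure on your own data.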

4. Real-World Applications

Anomaly Detection: Reducing the dimensions of sensor data in manufacturing makes it much easier to identify 'Outliers' that deviate from normal patterns.

Recommendation Systems: Services such as Netflix and YouTube compress user preferences into low-dimensional vectors so that similar content can be computed in real time.

Data Visualization: Projecting 100-dimensional data onto a 2D screen lets us visually confirm insights like "Clusters of High-Spending Customers."
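
For the outlier-detection case, one common pattern is to fit PCA on normal data and flag readings that the low-dimensional model reconstructs poorly. The sketch below assumes synthetic 'sensor' data, 2 retained components, and a 99th-percentile threshold; all three are illustrative choices, not recommendations.

```python
import numpy as np

rng = np.random.default_rng(1)
# Hypothetical sensor readings: 500 normal samples of 10 channels that
# really vary along only 2 hidden factors, plus small measurement noise.
factors = rng.normal(size=(500, 2))
mixing = rng.normal(size=(2, 10))
normal = factors @ mixing + 0.05 * rng.normal(size=(500, 10))

# Fit PCA on normal data only.
mean = normal.mean(axis=0)
_, _, Vt = np.linalg.svd(normal - mean, full_matrices=False)
components = Vt[:2]  # keep 2 principal axes

def reconstruction_error(x):
    """Distance between x and its projection onto the learned subspace."""
    centered = x - mean
    reconstructed = (centered @ components.T) @ components
    return np.linalg.norm(centered - reconstructed, axis=-1)

# Threshold: the 99th percentile of errors on normal data (an assumed choice).
threshold = np.percentile(reconstruction_error(normal), 99)

# A reading that breaks the usual pattern: one channel spikes on its own.
faulty = normal[0].copy()
faulty[3] += 5.0

print(reconstruction_error(faulty) > threshold)
```

Normal readings sit close to the 2-D subspace, so their error is small. A spike on a single channel does not fit the learned correlations between channels and shows up as a large residual.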

💡 Tech Leader's Advice

"Reduction isn't always the answer."

Excessive reduction causes Information Loss, which can degrade model performance. In practice, when using PCA, always check the Explained Variance Ratio: the share of the data's total variance retained by the components you keep.

Golden Rule: As a rule of thumb, aim to retain at least 95% of the original variance, then confirm the choice against downstream model performance. For visualization purposes, UMAP is often preferred over t-SNE because it is typically faster and tends to preserve more of the global structure.
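
The 95% check can be automated. Here is a minimal sketch, assuming PCA via SVD and synthetic data with 3 hidden factors spread over 20 features; the `components_for_variance` helper is a hypothetical name used only for this example.

```python
import numpy as np

def components_for_variance(X, target=0.95):
    """Smallest number of principal components whose cumulative
    explained variance ratio reaches `target`."""
    X_centered = X - X.mean(axis=0)
    singular_values = np.linalg.svd(X_centered, compute_uv=False)
    variance = singular_values ** 2
    cumulative = np.cumsum(variance) / variance.sum()
    # searchsorted returns the first index where cumulative >= target.
    k = int(np.searchsorted(cumulative, target) + 1)
    return min(k, len(cumulative)), cumulative

rng = np.random.default_rng(0)
# Hypothetical data: 20 observed features driven by 3 latent factors.
latent = rng.normal(size=(300, 3))
X = latent @ rng.normal(size=(3, 20)) + 0.1 * rng.normal(size=(300, 20))

k, cumulative = components_for_variance(X, target=0.95)
print(k)                       # components needed to reach the target
print(cumulative[:5].round(3)) # cumulative ratio for the first 5 components
```

If you already use scikit-learn, passing a float between 0 and 1 as `n_components` to `PCA` selects the number of components by explained variance in the same spirit.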

Conclusion: The Power to Control Complexity

Dimensionality reduction is the 'Invisible Hero' hidden behind flashy AI models. At a time when quality matters more than quantity, the ability to handle high-dimensional complex data will be a core competency for data scientists and engineers. How is your data pipeline doing? Strip away the unnecessary dimensions, and face the essence of your data today.